On December 19, 2017, federal officials ended a moratorium imposed three years earlier on funding research that alters germs to make them more lethal, writes Donald McNeil. In an article published in the New York Times, McNeil explains that while critics caution that this research risks creating a “monster germ,” others argue that it could yield clues to understanding viral mutations or provide insight into better vaccine development.

Dr. Francis S. Collins, the head of the National Institutes of Health (N.I.H.), insists that rigorous safety policies will be in place, that government panels will oversee the research and make sure that proper protocols are followed.

However, despite Collins’s reassurances, many are quite nervous about this new policy shift—and with good reason. McNeil describes three specific occurrences—one, when the Centers for Disease Control and Prevention (CDC) accidentally exposed lab workers to anthrax; two, when the CDC “shipped a deadly flu virus to a laboratory that asked for a benign strain”; and three, when the N.I.H. found “vials of smallpox” that had been forgotten about—that should be cause for alarm.

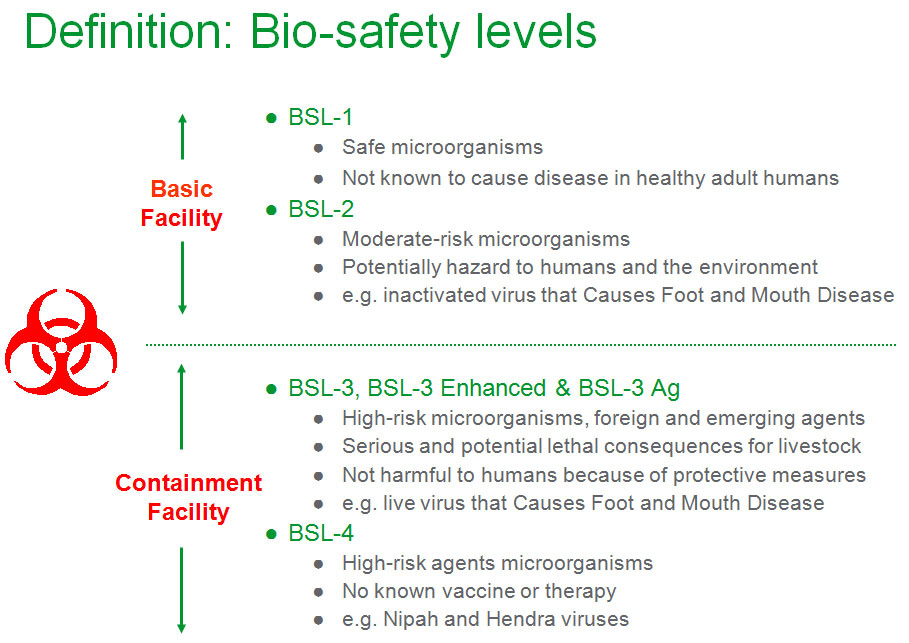

Biosafety levels (BSL) determine the safety precautions required by specific biological agents. These levels can dictate which labs can process which microbes, which protective equipment and protocols must be in place, and how the microbe itself is handled and contained. According to the CDC website, the levels are determined by “infectivity, severity of disease, transmissibility, and the nature of the work conducted,” as well as the origin of the microbe or agent in question and how it is transmitted.

BSL-1 microbes come with the lowest risk, whereas BSL-4 microbes are the most dangerous. A BSL-1 microbe might be a nonpathogenic strain of E. coli, for instance; nonpathogenic microbes do not cause disease. BSL-2 microbes are dangerous but less easy to transmit. BSL-3 microbes are potentially lethal and can spread via respiratory transmission, meaning that they can be spread much like the common cold. BSL-3 labs must therefore have sustained directional airflow and cannot rely on recirculated air, as the air itself could be contaminated. Entrance to the labs must also be through two sets of self-closing and locking doors, and protective clothing should be discarded or decontaminated after each use. The illnesses that result from inhaling BSL-3 microbes can, however, be treated.

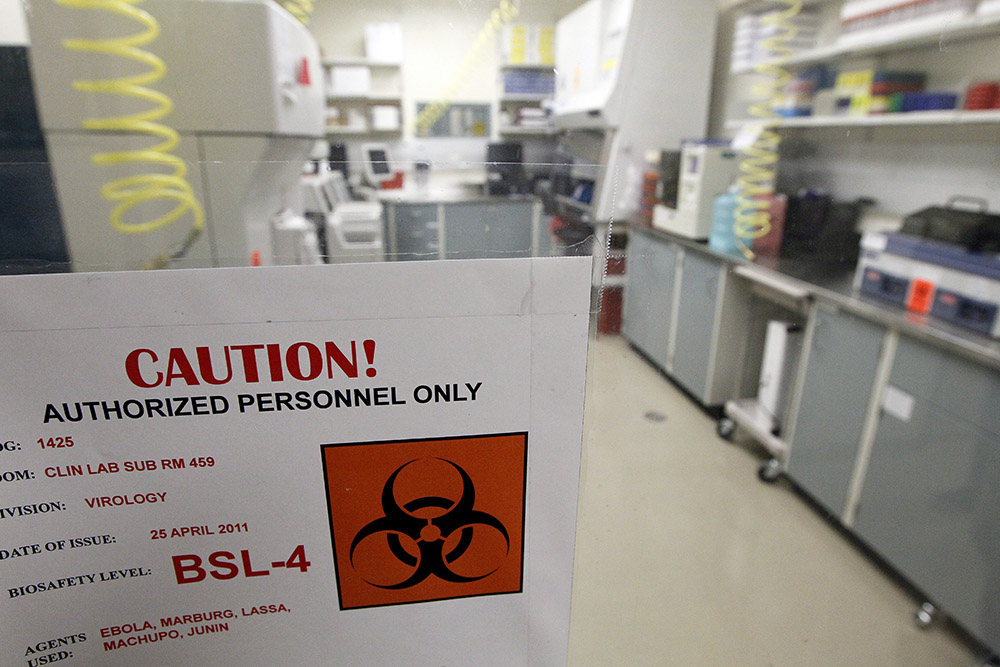

BSL-4 labs deal with the most dangerous and exotic microbes, such as Ebola and Marburg. While traditional anthrax, which can be treated, is considered BSL-3, weaponized anthrax, much like Ebola, is considered BSL-4. These microbes are the most dangerous and lethal, and infections by them are often fatal, with no vaccines or cures.

The severity of these pathogens makes it all the more ominous that the incidents McNeil describes are not the only times Americans have been brought uncomfortably close to dangerous microbes.

Just one week after 9/11, letters containing anthrax spores were mailed to five news media offices and two Democratic senators. Five people died and seventeen others were infected. In 2008, the Federal Bureau of Investigation eventually identified the culprit as Bruce Ivins, a scientist in the Army’s biodefense lab at Fort Detrick, in Maryland, although many still feel the bureau’s investigation was inconclusive. At the time, the fact that the anthrax used in the attacks was traced back to Fort Detrick also intensified questions of whether the greatest danger was already within our borders, possibly in the hands of our own government.

This terrorist attack was not the only time a dangerous pathogen made it out of the lab. In an article published by USA Today in May 2015, Alison Young and Nick Penzenstadler detail an investigation that revealed not only “hundreds of lab mistakes, safety violations and near-miss incidents” occurring in American biological laboratories, but also shoddy oversight. For instance, Young and Penzenstadler describe an incident in which the Department of Defense sent samples of live anthrax, instead of killed specimens, to labs across the USA, with as many as “18 labs in nine states” receiving the samples.

Especially troublesome is the fact that when research facilities commit egregious safety or security breaches, their names are kept secret, and “the scope of their research and safety records are largely unknown to most state health departments…Even the federal government doesn’t know where they all are.” National standards for the construction of these labs do not exist.

Young and Penzenstadler detail a few other incidents that indicate the kind of mistakes that can (and do) happen. For instance: a 2007 outbreak of foot-and-mouth disease in England is attributed to leaking drainage pipes at a research complex; a 2009 article in the New England Journal of Medicine links the 1977 H1N1 flu outbreak to “an accidental release from a laboratory”; a researcher in Wisconsin was quarantined for seven days in 2013 after a needle stick with a version of the H5N1 influenza virus; a lab worker in Colorado failed to ensure that specimens of the deadly bacterium Burkholderia pseudomallei had been killed before shipping them in May 2014 to a co-worker in a lower-level lab, who handled them without critical protective gear; and Tulane will spend the next few years testing wildlife around its National Primate Research Center to make sure that bacteria that got out of its BSL-3 labs have not contaminated the environment.

There are countless stories such as these, and there are even more that we do not know about.

Viral outbreaks are also a staple of contemporary American film and television. The thematic tropes that inevitably recur in films such as Outbreak (Wolfgang Petersen, 1995), 28 Days Later (Danny Boyle, 2002) and Contagion (Steven Soderbergh, 2011), as well as in television shows like Person of Interest (CBS, 2011-2016), The Blacklist (NBC, 2013-present), and The Last Ship (TNT, 2014-present), include a constant emphasis on making the invisible visible and, significantly, what I refer to as the “necessary accident.”

Within cinematic narratives, the necessary accident is an essential part of the plot. It supplies dramatic tension and propels the story forward. Quarantines, or their equivalent, inevitably fail. Someone (a zombie, an infected victim, or a terrorist) gets out or something (a zombie, a virus, a bomb) gets in. To illustrate, one of the central conflicts at the heart of the TV movie Pandemic (Hallmark, 2007) involves a businessman who defies quarantine, slipping out of the carefully contained area where all the other infected people are being held, because he considers himself too busy and important. His ego results in numerous deaths as he spreads the virus in his wake throughout the city of Los Angeles. The same thing happens in the TV movie Black Death (CBS, 1992), this time with a Congressman who tries to flee town, taking the infection with him. The disease always manages to spread, even to the scientists who are wearing protective apparel, and any attempt at quarantine on a large scale appears meaningless.

Yes, these are fictional narratives. But the outbreak narrative, as a concept, was largely inspired by real-life events, namely the Ebola outbreak at a primate quarantine facility in Reston, Virginia, in 1989. It was Richard Preston’s 1992 New Yorker article “Crisis in the Hot Zone” that launched not only his bestselling nonfiction thriller The Hot Zone: A Terrifying True Story (1995), but also Outbreak and the made-for-TV movie Virus (also known as Formula for Death), which aired on ABC in May 1995.

The Ebola outbreak of 1989 was particularly terrifying because it featured a strain of Ebola that could be transmitted through the air. Fortunately, the strain only affected monkeys, and so the outbreak was quickly contained. However, the recent move by federal officials to loosen restrictions on BSL-4 research means that an aerosolized version of Ebola may be back sooner than we think, and this time we might not be so lucky.

In his article, McNeil quotes Richard H. Ebright, a molecular biologist and bioweapons expert at Rutgers University. Ebright points out that the rules governing this new research cover only government-funded work, and that clearer minimum safety standards, as well as a mandate that the benefits “outweigh” the risks, instead of merely “justifying them,” are essential.

As all these narratives, both fictional and real, reiterate, human error is inevitable. Something will get out just as surely as something always gets in. Is it really worth tempting fate not only by bringing these viruses into our backyards but also by developing even more dangerous ones?
